Our designed reality

Two years late to the party, I discovered the brilliant review paper “Machine Behavior” by Iyad Rahwan and an all-star cast of co-authors. Its premise is that systems relying on artificial intelligence should be treated as objects of scientific study in their own right, even though they are, to some extent, engineered. The article opens with a quotation from Herbert Simon’s The Sciences of the Artificial, which feels right (Simon was one of the founders of artificial intelligence). However, I thought there must be more apt Simon quotations, which prompted me to leaf through his works again and ponder his lasting impact on today’s hot topics. Indeed, the authors seem to read “artificial” in Simon’s title as meaning the same thing it does in “artificial intelligence” today, which is not precisely what he intended.

The Sciences of the Artificial is one of my favorite works by Simon [1] and a polymathic tour de force. Not only did Simon connect seemingly disparate disciplines, he also managed to make readers from all these different backgrounds feel as if he were talking to them. People have cited The Sciences of the Artificial as foundational for design theory, for complexity science applied to the social sciences, and, now, for machine behavior. Moreover, the book does more than unify fields and tie up loose ends. It also develops ideas that run somewhat against the mainstream yet remain convincing more than half a century after its publication.

In Simon’s thinking, “the artificial” refers to things that are synthesized and (typically) designed and engineered by humans, which is indeed the same meaning the word had in “artificial intelligence” back then. We can characterize artifacts by their functions, goals, intended environment, and adaptations to the actual environment. Since our everyday life is a stream of interactions with such objects, and since they require a different kind of understanding than natural phenomena, sciences of the artificial are needed, and they are inevitably different from the natural sciences.

The thesis is that certain phenomena are “artificial” in a very specific sense: they are as they are only because of a system’s being molded, by goals or purposes, to the environment in which it lives. If natural phenomena have an air of “necessity” about them in their subservience to natural law, artificial phenomena have an air of “contingency” in their malleability by environment.

(Preface to the 3rd ed.)

This might not be so controversial in many fields (operations research, organization theory, management science, etc.). Still, it goes against one of the tenets of my own fields: to understand and explain everything human-made with the methods of the natural sciences. A common truism is that some technologies have now reached the complexity of biological systems, with the apparent corollary that we should understand them as such. In my reading, the main take-home message of The Sciences of the Artificial is that we need to study artifacts as designed things, which sets the sciences of the artificial, the social sciences among them, apart from the natural ones.

Isn’t everything just biology?

In the social data sciences of our time, including the machine-behavior review by Rahwan et al., biological analogies reign supreme. It would be hard even to find a vocabulary free of them. Is it still meaningful to understand our algorithmic world as designed? Maybe time has caught up with the ever-trailblazing Simon.

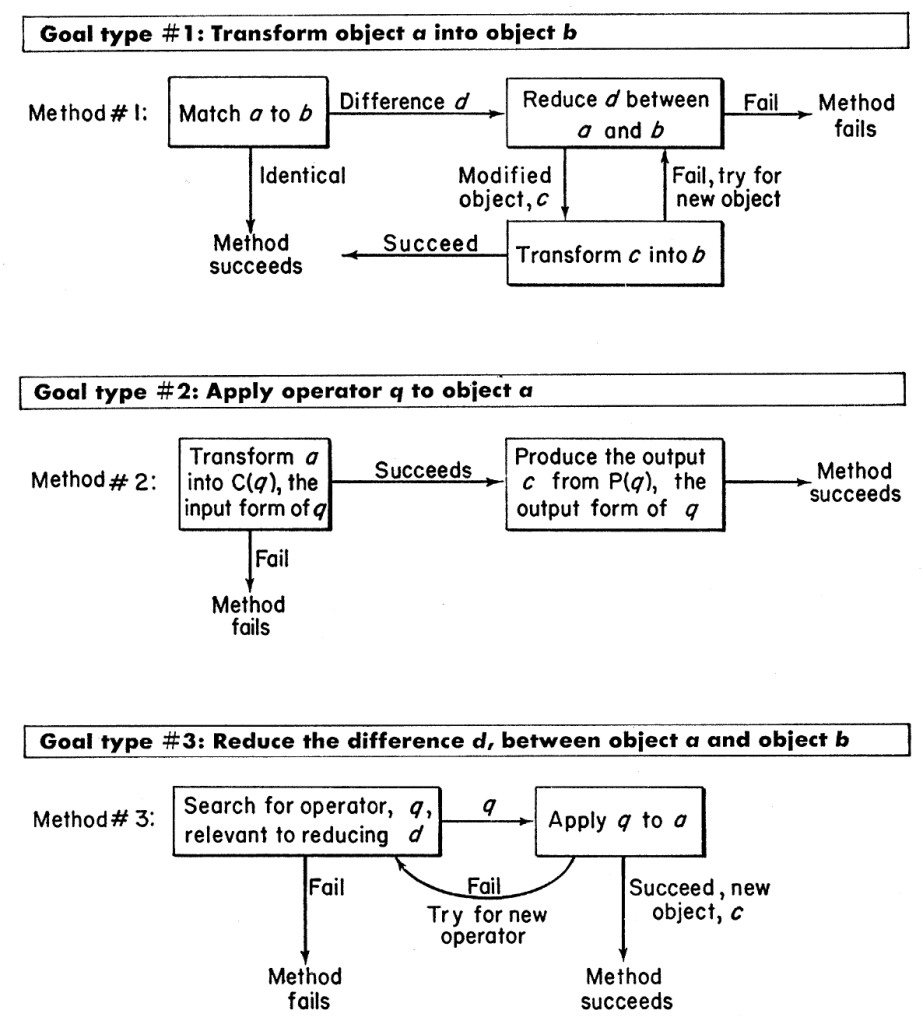

In his memoirs, Simon called the search for the “truth about human decision making” the “clearest theme” of his scientific career. Although his writing is exceptionally diverse, there is always some individual or organizational decision to be made somewhere in the background. Accordingly, Simon’s model of human thinking was a procedural computer program that identifies the situation, goals, and possibilities and eventually decides on an action. [2]

Even today, people propose analogies to computer programs to explain cognitive processes. However, the choice of program follows fashion: today’s candidates are no longer flowcharts of boxes and arrows but deep-learning language models like GPT-3. [3] Since these computing paradigms were themselves designed to emulate cognitive processes, such reasoning is somewhat circular. Still, it could be helpful for proofs of concept or for generating hypotheses.

Returning to the more general issue of basing the sciences of the artificial on biology, I think the immediate problem is what we can expect to get out of the analogies we make. It is easy to map one system onto another, but if no deeper insights can be brought back to the original question, what have we really gained? We may call the collection of active bots on social media an “ecosystem,” but if the mapping is so imprecise that we can import neither results nor methods from ecology, then “system” or “botsystem” would be far more appropriate.

I think there is an obvious danger in modeling today’s science of the artificial on biology. Perhaps a design-centric research agenda would be safer, or at least a good complement. On the other hand, maybe our future societies of humans and their machines will be so biological that there is no point in distinguishing between the sciences of the natural and the artificial. Time will tell.

Notes

[1] His essay collections (Models of Man, Models of Thought, Models of Discovery) are also very inspiring, and exceptionally readable even when they get technical.

[2] This was, of course, very much the zeitgeist, and there are more spectacular examples than Simon and Newell’s papers. One such gem is “Human dream processes as analogous to computer program clearance,” published in Nature. It also explains the human mind through the workings of computers, drawing on dream-deprivation research, which was likewise characteristic of the era. The paper was written by Chris Evans, a close friend of the futurist author J. G. Ballard, and it credits conversations with J. C. Lilly, the dolphin-communicating icon of the 1960s lysergic counterculture.

[3] Many people have already pointed out how incomplete such an approach would be. To me, being human seems to be more about procedural decision-making than about pattern recognition, even though many cognitive processes lie outside the scope of Newell and Simon’s approach. So, even though we don’t think by deep learning, I suspect there won’t be a full resurgence of procedural models of human thinking.