Among my closest scientific colleagues, The Limits to Growth is surprisingly unknown, so on its 50th anniversary, this blog post offers an introduction plus my reflections. In short, it's a fascinating story of what happens when computational social science makes a splash.

If we interpret computational social science literally, not just as social media data mining, its most influential work celebrates its 50th anniversary this year. The Limits to Growth (LTG) presented simulations of World3 [1], a system dynamics model of humanity and its impact on the Earth. For a combination of scientific and political reasons, it became astoundingly influential, contentious, and polarizing, with echoes lasting to this day. If contemporary computational social science aspires to improve the world, we have a lot to learn from LTG: its inception, reception, and repercussions.

When the 1960s turned into the 70s, the accelerating growth of the Earth's population was a significant public concern. In 1968, Stanford professor Paul Ehrlich's The Population Bomb argued that, within a few decades, starvation would increase and eventually reach the middle class of the industrialized countries. Another concern in the budding environmentalist movement was pollution by toxins like DDT. Here, too, there was an enormously influential book: Rachel Carson's Silent Spring (1962). Yet another worry was the downsides of economic growth (cf. Mishan's The Costs of Economic Growth). On top of these concerns, science's esteem was soaring in the years of the Apollo program [2], and that included the young field of computer simulations of society. The stage was set for a work that could combine all these elements.

LTG was the report of a team of MIT scientists (Meadows, Meadows, Randers, Behrens) carrying out a project "on the predicament of mankind" that looked at humanity's future up to the end of the 21st century. It came out of the Club of Rome, a gathering of thinkers (a "non-organization") with roots, surprisingly, in the OECD, headed by the Italian industrialist Aurelio Peccei. Indeed, reading Peccei's prescient The Chasm Ahead (1969), one can't help but wonder what the other members contributed, since it contains most of LTG's precepts and conclusions (but, of course, no simulations). Peccei worried about the accelerating pace of civilization [3], the technological divide, the post-war political order, and, indeed, demographics, famine, and pollution.

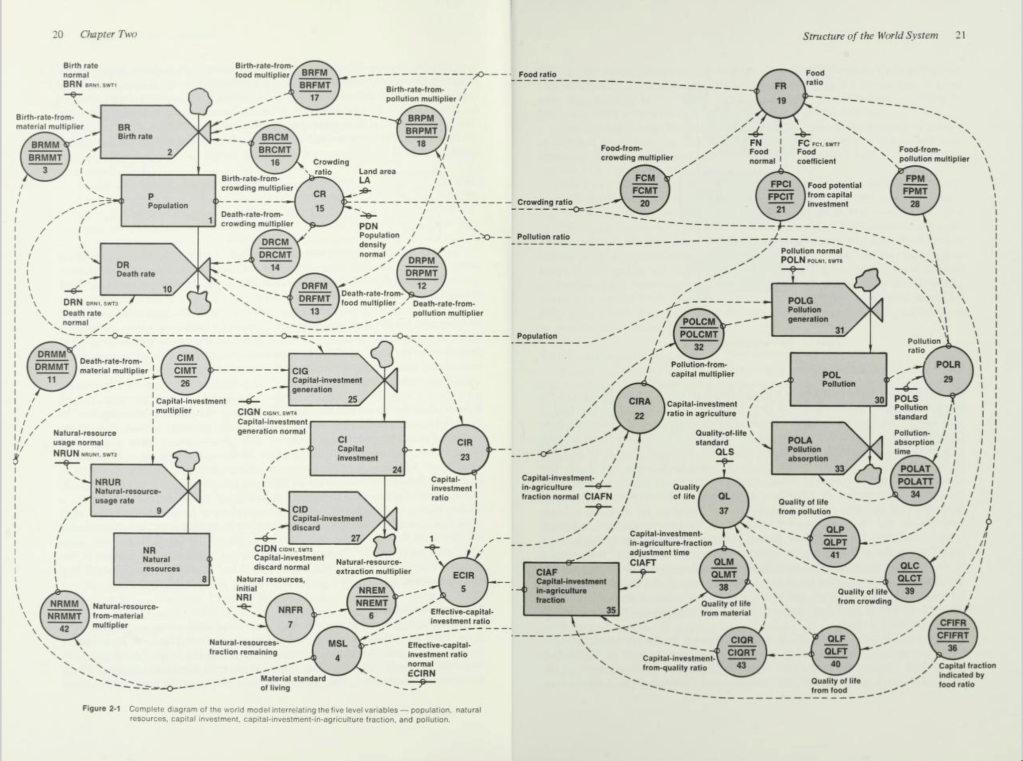

LTG's World3 model closely followed Jay Forrester's World Dynamics simulations [4]. The scenarios the World3 model painted were bleak. All but the most optimistic outcomes contain decades in the latter half of the 21st century in which the Earth's population falls dramatically because of increased mortality.

The scale of the debate that followed the publication is hard to fathom today. In mass media, scientific conferences, documentaries, books, and whatnot, people discussed the ins and outs of World3 and the future of humanity. To get a feeling for the debate in all its varieties, I recommend Models of Doom (by a team from the University of Sussex), Modeling the World (by sociologist Brian Bloomfield), economist Julian Simon's The Ultimate Resource, and (although not so explicitly polemic) the follow-up Dynamics of Growth in a Finite World by the original LTG team [5]. The discussions covered every aspect imaginable, from the purpose of computational models, via sensitivity to initial conditions, to (more recently) how LTG's predictions have stood the test of time.

Reading LTG in 2022 is like stepping into a time machine that takes you back in time, not forward: to a time when the threats were famine from overpopulation and disease from environmental toxins, not global warming from the greenhouse effect [6]. As a book about the future, it was a failure. Perhaps every project attempting such a long time horizon [7] is fated to become a record of the zeitgeist of its publication date. On the other hand, what failure has created more research, knowledge, and action? So it was also an intellectual triumph.

What does LTG teach present-day computational social scientists? Two points that are still valid but unfortunately somewhat lost in this era of causal inference:

1. In a system as complex as the world, it's insufficient to base policy arguments on simple cause-and-effect statements. They need to be connected into graphs (systems diagrams) containing cycles (feedback loops).
2. If one can make a verbal argument for a policy, one should also be able to make it with a simulation model. For if one can't, then what good is the argument?
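To make the first point concrete, here is a minimal stock-and-flow sketch in Python, in the spirit of (but vastly simpler than) World3. It is neither World3 nor PyWorld3; every parameter (birth rate 0.03, base death rate 0.01, per-capita consumption 0.1, an initial resource stock of 100) is a made-up, illustrative assumption. Two feedback loops interact: a reinforcing loop (more people, more births) and a balancing loop (more people, faster depletion, higher mortality). Neither arrow on its own predicts the outcome, which is growth, overshoot, and decline.

```python
def simulate(years=300.0, dt=0.5):
    """Euler-integrate a toy population/resource model.

    All parameters are illustrative assumptions, not World3 values.
    Returns a list of (time, population, resource) tuples.
    """
    population = 1.0          # arbitrary units
    resource = 100.0          # nonrenewable stock, arbitrary units
    initial_resource = resource
    history = []
    for step in range(int(years / dt)):
        t = step * dt
        history.append((t, population, resource))
        # 1.0 = untouched, 0.0 = exhausted
        availability = resource / initial_resource
        # Reinforcing loop: more people -> more births
        births = 0.03 * population
        # Balancing loop: scarcity pushes mortality above the birth rate
        deaths = (0.01 + 0.04 * (1.0 - availability)) * population
        # Per-capita demand, never consuming more than is left this step
        consumption = min(0.1 * population, resource / dt)
        population += (births - deaths) * dt
        resource -= consumption * dt
    return history


if __name__ == "__main__":
    # Print every 50th year to see the rise and fall
    for t, p, r in simulate()[::100]:
        print(f"t={t:6.1f}  population={p:6.2f}  resource={r:6.2f}")
```

Changing a parameter, say the per-capita consumption, and rerunning is the kind of policy experiment Beer describes in note [2]; the sketch also shows why such experiments deserve disclaimers, since the trajectory depends entirely on the assumed coefficients.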

From my perspective as an outsider and fan, it is disheartening when LTG is presented today without mention of its failures or, worse, when it is reinterpreted, the goalposts are moved, or excuses are made. It feels like a betrayal of its genuinely pioneering spirit (which should be its legacy, not the models or assumptions). Of course, the greenhouse effect was only a hypothesis in 1971, and almost anyone would probably have missed it at the time. There is no shame in failing to predict the world 50 years later, but having an excuse doesn't make something a success. We scientists need to take words at face value, because that is what the public does.

In the future, there will be other scientific books or articles that, like LTG, catch the public's attention. When and in what form, I can't guess. Perennial cultural tropes, like the end of the world, probably help. More importantly, though, science needs hard-earned trust from society; there are no shortcuts when improving the world.

Notes

[1] For pythonistas out there, I recommend playing with PyWorld3.

[2] As a typical example, in his Designing Freedom (p. 44), Stafford Beer said: “You probably know that it is possible by electronic simulation to make a ten-year-ahead projection instantaneously, and then to change your policy and see what difference it makes.” No disclaimers on the horizon.

[3] Including concepts like information overload, later popularized by Alvin Toffler in Future Shock. Don't miss the film version narrated by Orson Welles.

[4] For Forrester, one reason to model all of humanity, with its resources, environment, and economy, was to simplify the boundary problem: modeling any subsystem of society would inevitably increase the number of input and output terminals of his system dynamics models.

[5] I haven't yet got hold of the 1979 OECD report Facing the Future, which signaled a move away from the ideas of the Club of Rome. Oh, it's here!

[6] The greenhouse effect was an unconfirmed hypothesis in the early 1970s. Still, LTG does cite the Keeling curve (measurements motivated by the greenhouse effect) as evidence of human impact on nature, and it mentions environmental heating as a future problem, though heating from the dissipation of increased energy consumption, not from the greenhouse effect.

[7] Today, we have The Long Now Foundation, Longtermism, The Future of Humanity Institute, etc.